Listen to Your Gut

Ali Momeni (2015)

We have witnessed a notable rise in research and commercial interest in the human gut, including the enteric nervous system and the gut microbiota. The gut, sometimes referred to as the “second brain,” is touted for its crucial role in regulating human physiology and for its influence on a wide range of diseases, many of which afflict significant portions of the population. Over the past year, an interdisciplinary team from Carnegie Mellon University has been conducting an experiment to study the mind-gut connection through analysis of auditory signals from the human gut. Initial results from our human-subject trial provide strong evidence that the mind-gut connection can be studied effectively through audio analysis of bowel sounds. We envision a connected (IoT) device that functions as an accessory to a mobile phone. The device would make ongoing audio recordings of the gut, push the recordings to the cloud for analysis, use experience-sampling techniques to label the data, and provide opportunities to experiment with biofeedback as a way of helping users develop an implicit and explicit understanding of their gut's function.

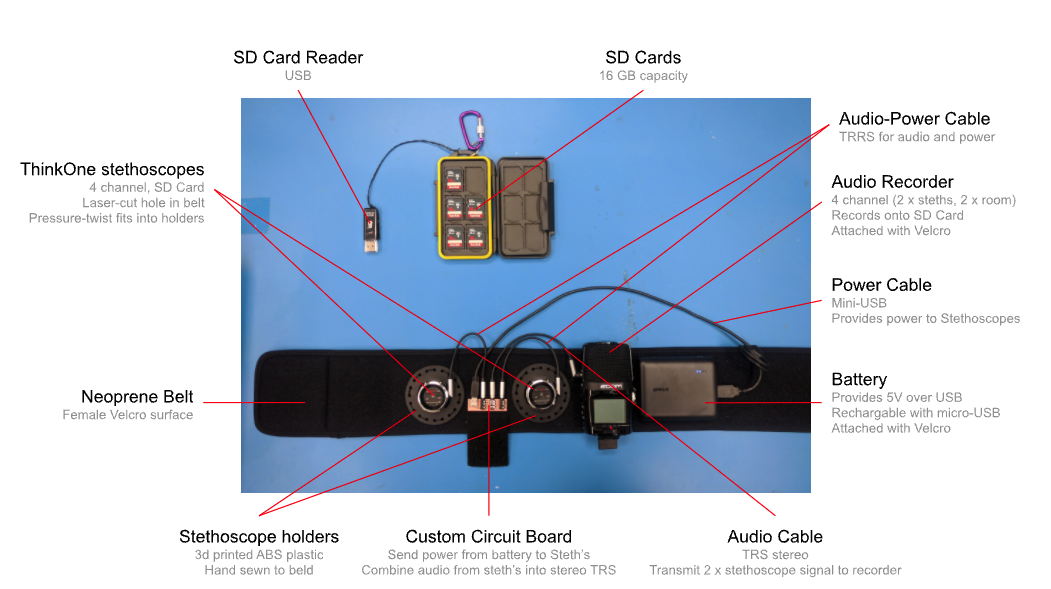

We have completed an experiment that provided us with high-quality audio recordings of the bowel sounds of our 100 subjects in each of six states: baseline (seated, resting), disgust (video stimulus), anxiety (simulated public-speaking task), concentration (a video game), frustration (a broken video game), and digestion (after eating). Using a custom non-invasive device, we captured audio signals from the gut and analyzed the recordings using a range of statistical and machine learning techniques. The results of our analysis are remarkable and promising:

- With 5 minutes of analyzed audio from each state for a subject, we are able to classify a new 1-minute segment of audio from the same subject with extremely high accuracy (>95%).

- With 10–30 minutes of analyzed audio from each state for a small group of subjects (20), plus 10 minutes of baseline audio from a new subject, we are able to classify a new 1-minute segment from this new subject with good accuracy (~65%). When the states are limited to those with more distinct signatures (baseline, concentration, digestion, disgust), classification accuracy rises to ~82%.
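The analysis techniques are not specified here, so what follows is only an illustrative sketch of the within-subject setting in the first result. Each state's recording is cut into 1-minute segments, and each segment is reduced to a feature vector. A new segment is then assigned to the state whose mean feature vector is nearest. The features (RMS energy and zero-crossing rate), the nearest-centroid classifier, and all function names are hypothetical stand-ins chosen for brevity. They are not the team's actual pipeline.

```python
import math
from statistics import mean

SEGMENT_SECONDS = 60  # 1-minute segments, matching the evaluation unit described above


def segment(signal, sample_rate, seconds=SEGMENT_SECONDS):
    """Split a mono signal into non-overlapping windows; a trailing partial window is dropped."""
    n = int(sample_rate * seconds)
    return [signal[i:i + n] for i in range(0, len(signal) - n + 1, n)]


def features(window):
    """Hypothetical feature vector (an assumption): RMS energy and zero-crossing rate."""
    rms = math.sqrt(sum(x * x for x in window) / len(window))
    crossings = sum(1 for a, b in zip(window, window[1:]) if (a < 0) != (b < 0))
    zcr = crossings / (len(window) - 1)
    return (rms, zcr)


def train_centroids(recordings, sample_rate):
    """Map each state name to the mean feature vector of its 1-minute segments.

    `recordings` maps state name -> list of samples; each recording is assumed
    to contain at least one full segment (e.g. 5 minutes per state).
    """
    centroids = {}
    for state, signal in recordings.items():
        feats = [features(w) for w in segment(signal, sample_rate)]
        centroids[state] = tuple(mean(f[k] for f in feats) for k in range(len(feats[0])))
    return centroids


def classify(window, centroids):
    """Assign a new window to the state with the nearest centroid (Euclidean distance)."""
    f = features(window)
    return min(centroids, key=lambda state: math.dist(f, centroids[state]))
```

In practice, features on different scales would normally be standardized before distances are computed. That step is omitted here to keep the example short.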

We envision future applications of our device and analysis system spanning diagnosis, treatment, and research. We see potential for significant impact in two initial areas:

Gastroenterology: Within the healthcare system, we have identified Irritable Bowel Syndrome (IBS), bowel obstruction, and postoperative ileus as three pervasive GI conditions that afflict millions and are strong candidates for diagnosis through audio analysis. In addition to providing medical professionals with new means of diagnosing these conditions, we envision opportunities for significant cost savings within medical systems through better diagnosis.

Mind-Gut Connection: Within the consumer space, we envision a device analogous to the popular Fitbit, but for the gut. This product would combine a wearable audio-capture device with a cloud-based analysis system. Together, these would significantly ramp up our ability to collect data, let us experiment with biofeedback applications that help users better understand their mind-gut connection, improve our learning algorithms, and move us toward enhanced diagnostic and predictive capabilities.

This project is sponsored by microgrant #2014-062 from the Frank-Ratchye Fund for Art @ the Frontier, the Center for Machine Learning and Health (CMLH), and the University of Pittsburgh Medical Center (UPMC).

Team:

George Loewenstein (CMU Social and Decision Science, Co-Principal Investigator)

Ali Momeni (CMU Art, Co-Principal Investigator)

Max G’Sell (CMU Statistics, Co-Investigator)

Rich Stern (CMU ECE, Co-Investigator)

Peter Elliot (CMU Statistics, PhD Candidate)

Kunjoon Byun (CMU, Project Manager)